I’ve been building my own PCs for nearly two decades now, and, as I’m sure many of you have experienced, once people know you can do something like that, you will forever and always be known as the “Computer Guy.” Prepare to solve all your friends’ and family’s computer problems for the rest of your life. “With great power comes…” I can’t make myself finish the line.

Anyway. I’m often asked, either by friends and family or by other Internet dwellers, just what the best [Insert Product] is. Depending on the person asking, and my level of patience that day, I either say “[Insert Product] is the best,” or I give a real answer about the pros and cons of the various technologies out there.

As anyone who has tried to thoughtfully answer that kind of question knows, there typically is no “best” product out there. The better question, and one I’d like to hear more often, is, “What is the best [Insert Product] for doing [Insert Task]?” However, even that question usually can’t be answered by just spouting off the current flavor-of-the-month recommendation. I’m usually asked these kinds of questions about CPUs, GPUs, RAM, motherboards, etc… you know, the guts of the machine. Occasionally, someone will ask about gaming mice, keyboards, and headsets. Strangely, I’ve only been asked about monitors a handful of times. I say “strangely” because buying the right monitor can be a little overwhelming if you know absolutely nothing about what you need. That said, I’m sure many people overlook the question because most monitors will work with just about any computer, and people often equate monitors with TVs. By that I mean they simply buy the biggest monitor they can afford with the best resolution and call it a day.

In the past, I suppose this buying strategy worked out just fine, even if I don’t recommend buying something that expensive without knowing what you’re getting first. These days, however, monitors are evolving so rapidly that the market is saturated with acronyms and extra features that may or may not be worth the coin you’re dropping. So, let’s take a closer look at the world of monitors, and maybe by the end I can help some folks get a better idea of what to be on the lookout for… at least until the next evolution takes place.

Main Concerns When Buying

Okay, so, I’m going to have to start by reiterating that your main concerns when buying a new monitor depend ENTIRELY on what you want your machine to be doing. Seeing as how this is a site about games and gaming, I think it goes without saying that we’ll be focused on the gaming side of monitors.

Screen Size, Aspect Ratio, Resolution

Obviously, you don’t want to buy that beastly 34-inch monitor if it isn’t going to fit on your desk. No-brainer here… make sure you’ve got the desk real estate to handle whatever you’re buying.

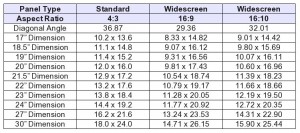

As gamers, the next thing we’re going to want to pay attention to is the monitor’s Aspect Ratio. Unsurprisingly, this can also affect the physical size of the monitor. So, don’t buy that 21:9 widescreen monster if it’s going to cause an issue with placement.

What is Aspect Ratio, you ask? It’s simply the relationship of the display’s width to its height. The most common aspect ratios are 16:9 and 21:9. Historically, 4:3 was the standard, but it has been phased out and shouldn’t even be considered when shopping for a new gaming monitor. Similarly, 16:10 was popularized in the early 2000s and then phased out in favor of the 16:9 aspect ratio. However, with the popularization of tablets, 16:10 is seeing a bit of a resurgence, as it is considered to be a more productivity-oriented aspect ratio, while 16:9 is favored for media.

The new kid on the block is 21:9. This is the aspect ratio of your ultra-wide screen monitor, the kind that looks absurd when powered off and glorious when powered on and gaming.
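If you want to check the desk real estate question before buying, the advertised diagonal and the aspect ratio are enough to estimate the panel's width and height. The sketch below is only a rough illustration: the function name is made up for this example, and it measures the visible panel only, so bezels and the stand will add to the real footprint.

```python
import math


def panel_dimensions(diagonal_in, ratio_w, ratio_h):
    """Estimate (width, height) in inches of the visible panel, bezels excluded."""
    # The diagonal is the hypotenuse of a right triangle whose legs
    # are in the proportion ratio_w : ratio_h.
    unit = diagonal_in / math.hypot(ratio_w, ratio_h)
    return ratio_w * unit, ratio_h * unit


# Example sizes are assumptions chosen for illustration.
for label, diagonal, w, h in [("27 inch 16:9", 27, 16, 9), ("34 inch 21:9", 34, 21, 9)]:
    width, height = panel_dimensions(diagonal, w, h)
    print(f"{label}: about {width:.1f} in wide x {height:.1f} in tall")
```

The takeaway is that a 34-inch 21:9 panel is roughly as tall as a 27-inch 16:9 one but several inches wider, which is exactly where placement problems tend to show up.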

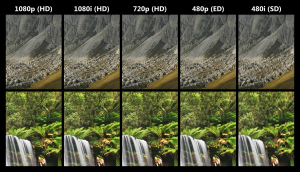

In terms of Screen Resolution, your aspect ratio will directly impact the kinds of common resolutions you’ll have available to you. The most common resolution, 1920×1080, is a 16:9 aspect ratio, while the 16:10 “counterpart” is 1920×1200. It should be noted that only 1920×1080 is 1080p and thus FHD (Full High Definition); 1920×1200 has the same width with 120 extra rows of pixels and is usually labeled WUXGA instead.
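To see how a resolution maps back to an aspect ratio, you can reduce the width and height by their greatest common divisor. This is a minimal sketch with a hypothetical helper name, not anything a monitor vendor publishes.

```python
from math import gcd


def aspect_ratio(width_px, height_px):
    """Reduce a pixel resolution to its simplest width:height ratio."""
    divisor = gcd(width_px, height_px)
    return width_px // divisor, height_px // divisor


for w, h in [(1920, 1080), (1920, 1200), (2560, 1440), (3440, 1440)]:
    ratio_w, ratio_h = aspect_ratio(w, h)
    print(f"{w}x{h} -> {ratio_w}:{ratio_h} ({w / h:.2f}:1)")
```

Two things stand out when you run it. 1920×1200 reduces to 8:5, which is the same ratio as 16:10, and 3440×1440 reduces to 43:18 rather than an exact 21:9, so treat “21:9” as a marketing label for a family of ultra-wide resolutions rather than a precise figure.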

The industry has flooded the market with all kinds of acronyms that each mean slightly different things. For instance, HD, FHD, QHD, and UHD are all varieties of High Definition, but which one you end up with can make a dramatic impact on your choice of monitor.

This is what the acronyms usually mean:

- HD (High Definition)

- Is often used by itself as a catch-all for all of the other types of High Definition displays. So, when reading about a monitor that is “HD,” make sure to check just what resolutions it can handle. If it tops out at 720p (1280×720), it is a 16:9 monitor that only meets the minimum resolution to be considered High Definition.

- FHD (Full High Definition)

- The term Full High Definition is often used to indicate that the monitor supports 1080p resolution (or 1080i, which makes the label misleading). 1080p is considered the “Real Deal” when it comes to HD quality.

- The difference between 1080p and 1080i is a technical one: progressive scan (the “p”) draws every line of every frame, while interlaced (the “i”) alternates between drawing the odd and the even lines on each pass. Google it if you want specifics. Suffice it to say that 1080i is generally considered inferior to 1080p and NOT true HD quality.

- QHD (Quad High Definition)

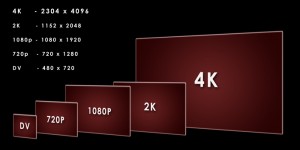

- QHD (not to be confused with qHD, a quarter of 1080p at 960×540) is a 2560×1440 resolution for 16:9 monitors; the 21:9 ultra-wide equivalent is 3440×1440, usually sold as UWQHD. 2560×1440 is a 1440p resolution that is also sometimes referred to as WQHD. After 1080p was popularized, 1440p was seen as the next logical step. However, it lost much of the marketing spotlight once 4K monitors became more popular.

- UHD (Ultra High Definition) – 4K

- Currently the industry is pushing 4K resolution as the new target for all things HD. Strictly speaking, 4K is 4096×2160, a digital cinema resolution that is slightly wider than 16:9.

- However, the term UHD usually refers to 3840×2160, which is the 16:9 resolution most “4K” monitors actually use. This isn’t technically a 4K resolution. So, when seeing UHD monitors, again, make sure that you know exactly what resolution you’re buying.

These are the most common acronyms, but you could run into others that mean slightly different things, such as Quad Full HD, which is another term for 3840×2160 UHD (four times the pixels of 1080p) and, strictly speaking, not the 4096-wide 4K.
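One way to make sense of the acronym soup is to compare raw pixel counts, since every extra pixel is more work for your GPU on every frame. The sketch below uses only the resolutions mentioned above; the dictionary labels and the function name are just for illustration.

```python
# Resolutions taken from the acronym list above; labels are informal.
RESOLUTIONS = {
    "HD (720p)": (1280, 720),
    "FHD (1080p)": (1920, 1080),
    "QHD (1440p)": (2560, 1440),
    "UHD": (3840, 2160),
    "4K (4096 wide)": (4096, 2160),
}


def pixel_count(width_px, height_px):
    """Total number of pixels the GPU has to fill for one frame."""
    return width_px * height_px


baseline = pixel_count(*RESOLUTIONS["FHD (1080p)"])
for label, (w, h) in RESOLUTIONS.items():
    pixels = pixel_count(w, h)
    print(f"{label}: {w}x{h} = {pixels:,} pixels ({pixels / baseline:.2f}x FHD)")
```

Pixel count is only a rough proxy for how hard a game will be to run, but it does show why jumping from 1080p to a 3840×2160 panel, with four times the pixels, is a much bigger ask of your graphics card than the jump to 1440p.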

LCD or LED?

More acronyms. Yay. Thankfully these descriptors are a bit more straightforward to understand. The first thing you need to understand is that an LED display IS an LCD display. Yep. The difference is that displays sold as “LED” use… LEDs for backlighting, while displays sold as plain “LCD” typically use cold cathode fluorescent lamps (CCFL) to provide backlighting.

So… what on Earth does that mean in practical terms? LED displays are usually thinner, consume less power, and often provide better black levels and contrast. In short… buy LED if you can. For gaming, having proper blacks and contrast can literally change a game’s nighttime landscape. That sniper that just obliterated you from the cover of darkness? Yeah, that one you didn’t even see? If you had proper black levels and contrast, there’s a good chance you would have seen him skulking about.

Panel Type

When it comes to LCD displays, people often overlook the panel type. The two most common types you’ll likely see are Twisted Nematic (TN) and In-Plane Switching (IPS). TN panels are usually cheaper, but you get what you pay for, as IPS displays usually have better color performance. Many TN panels have a limited native color range and rely on dithering to fake the colors they can’t show directly, which can also affect image sharpness, while IPS displays typically cover a wider range of colors natively and so lean on dithering far less, without losing image clarity.
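To put the color range point in numbers, here is a small sketch that counts how many distinct colors a panel can show natively for a given bit depth per color channel. The 6-bit and 8-bit figures are assumptions for illustration (cheaper panels are often 6-bit, but check the spec sheet of the monitor you are actually considering), and the function name is hypothetical.

```python
def displayable_colors(bits_per_channel, channels=3):
    """Number of distinct colors for a given bit depth per red/green/blue channel."""
    levels_per_channel = 2 ** bits_per_channel
    return levels_per_channel ** channels


# Assumed bit depths for illustration only.
for bits in (6, 8):
    print(f"{bits}-bit per channel: {displayable_colors(bits):,} colors")
```

Dithering is the trick a panel uses to approximate the shades that fall in the gap between those two numbers, by mixing or rapidly alternating the colors it can actually produce.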

As you can see, there are a plethora of options available to you when you’re looking for a new monitor. In part 2, we’ll take a final look at some of the remaining important factors to consider when shopping around.